There seems to be some confusion over what the HoloLens’s depth sensor does, on the one hand, and what the HoloLens’s waveguides do, on the other. Ultimately the HoloLens HPU fuses these two streams into a single image, but understanding the component pieces separately is essential for creating good holographic UX.

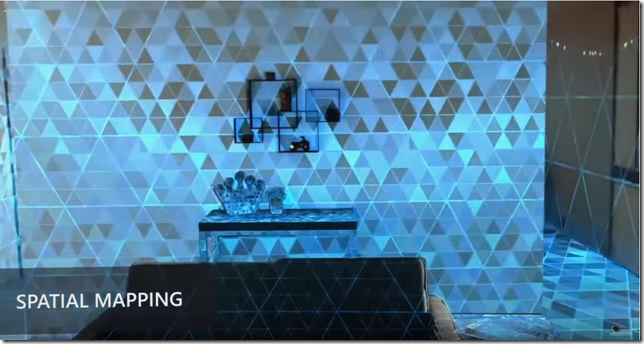

From what I can gather (though I could be wrong), HoloLens uses a single depth camera, similar to the Kinect’s, to perform spatial mapping of the surfaces around a HoloLens user. If you are coming from Kinect development, this is the same thing as surface reconstruction with that device. Different surfaces in the room are scanned by the depth camera. Multiple passes of the scan are stitched together and merged over time (even as you walk through the room) to create a 3D mesh of the many planes in the room.

These surfaces can then be used to provide collision detection information for 3D models (holograms) in your HoloLens application. The range of the depth camera used for spatial mapping is 0.85 meters to 3.1 meters. This means that if a user wants to include a surface that is closer than 0.85 m in their HoloLens experience, they will need to lean back.
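
The lean-back arithmetic can be sketched in a few lines. This is only an illustration of the range quoted above, not anything from the HoloLens SDK; the function name `distance_to_mappable` and both constants are made up for this example.

```python
# Depth camera range as quoted in this post (not read from any HoloLens API).
DEPTH_MIN_M = 0.85
DEPTH_MAX_M = 3.1


def distance_to_mappable(surface_distance_m: float) -> float:
    """Return how far the user must move to bring a surface into range.

    Positive means move back by that many meters, negative means move
    closer, and 0.0 means the surface is already inside the range.
    """
    if surface_distance_m < DEPTH_MIN_M:
        return DEPTH_MIN_M - surface_distance_m
    if surface_distance_m > DEPTH_MAX_M:
        return DEPTH_MAX_M - surface_distance_m
    return 0.0
```

For a tabletop 0.5 m away, for example, the function reports that the user would need to back off by roughly 0.35 m before the surface can be scanned.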

The functioning of the depth camera shouldn’t be confused with the functioning of the HoloLens’s four “environment aware” cameras. These cameras are used to help pin down the orientation of the HoloLens headset in what is known as inside-out positional tracking. You can read more about that in How HoloLens Sensors Work. It is probably the case that the depth camera is used for finger tracking while the four environment aware cameras are devoted to spatial mapping.

The depth camera spatial mapping in effect creates a background context for the virtual objects created by your application. These holograms are the foreground elements.

Another way to make the distinction, based on technical functionality rather than on the user experience, is to think of the depth camera surface reconstruction data as input and holograms as output. The camera is a highly evolved version of the keyboard while the waveguide displays are modern CRT monitors.

It has been misreported that the minimum distance for virtual objects in HoloLens is also 0.85 meters. This is not so.

The minimum range for hologram placement is more like 10 centimeters. The optimal range for hologram placement, however, is 1.25 m to 5 m. In UWP, the ideal range for placing a 2D app in 3D holographic space is 2 m.

Microsoft also discusses another range they call the comfort zone for holograms. This is the range where vergence-accommodation mismatch, one of the causes of VR sickness for some people, doesn’t occur. The comfort zone extends from 1 m to infinity.

| Range Name | Minimum Distance | Maximum Distance |
| --- | --- | --- |
| Depth Camera | 0.85 m (2.8 ft) | 3.1 m (10 ft) |
| Hologram Placement | 0.1 m (4 inches) | infinity |
| Optimal Zone | 1.25 m (4 ft) | 5 m (16 ft) |
| Comfort Zone | 1.0 m (3 ft) | infinity |
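
To make the overlaps between these ranges easier to see, here is a minimal sketch that reports which of the ranges in the table a given distance falls into. The `RANGES` list and the `zones_at` helper are hypothetical names for this example only; the numbers are the ones from the table, not values queried from the device.

```python
import math

# (name, minimum in meters, maximum in meters), copied from the table.
RANGES = [
    ("Depth Camera", 0.85, 3.1),
    ("Hologram Placement", 0.1, math.inf),
    ("Optimal Zone", 1.25, 5.0),
    ("Comfort Zone", 1.0, math.inf),
]


def zones_at(distance_m: float) -> list[str]:
    """List every range from the table that contains the given distance."""
    return [name for name, lo, hi in RANGES if lo <= distance_m <= hi]
```

At 2 m, the distance suggested for 2D apps, all four ranges apply. At 0.5 m only hologram placement does, which is the short-range gap discussed next.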

The most interesting zone, right now, is of course that short range inside of 1 meter. That 1 meter minimum comfort distance basically prevents any direct interactions between the user’s arms and holograms. The current documentation even says:

> Assume that a user will not be able to touch your holograms and consider ways of gracefully clipping or fading out your content when it gets too close so as not to jar the user into an unexpected experience.
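
One way to read "fading out your content when it gets too close" is a simple opacity ramp driven by the hologram's distance from the user. The sketch below is an assumption on my part, not the documented approach: the linear ramp, the `fade_alpha` name, and the choice to start fading at the 1 m comfort boundary and finish at the 0.1 m minimum placement distance are all illustrative.

```python
# Assumed thresholds, borrowed from the ranges discussed in this post.
FADE_START_M = 1.0  # fully opaque at or beyond the comfort-zone boundary
FADE_END_M = 0.1    # fully transparent at or inside minimum placement distance


def fade_alpha(distance_m: float) -> float:
    """Linear opacity ramp between FADE_END_M (0.0) and FADE_START_M (1.0)."""
    t = (distance_m - FADE_END_M) / (FADE_START_M - FADE_END_M)
    # Clamp so distances outside the ramp stay fully visible or fully hidden.
    return max(0.0, min(1.0, t))
```

In a real app the returned value would drive the material's alpha each frame. A hard clip at a fixed distance is the alternative the documentation mentions, and it is simpler but more abrupt.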

When a user sees a hologram, they will naturally want to get a closer look and inspect the details. When a human being looks at something up close, he typically wants to reach out and touch it.

Tactile reassurance is hardwired into our brains and is a major tool for human beings to interact with the world. Punting on this interaction, as the HoloLens documentation does, is a great way to avoid this basic psychological need in the early days of designing for augmented reality.

We can pretend for now that AR interactions are going to be analogs of mouse clicks (air-tap) and touch-less display interactions. Eventually, though, that 1 meter range will become a major UX problem for everyone.

[Updated 4/6 after realizing I probably have the functioning of the environment aware cameras and the depth camera reversed.]

[Updated 4/21 – nope. Had it right the first time.]