There’s been a lot of debate concerning how the HoloLens display technology works. Some of the best discussions have been on reddit/hololens but really great discussions can be found all over the web. The hardest problem in combing through all this information is that people come to the question at different levels of detail. A second problem is that there is a lot of guessing involved and the amount of guessing going on isn’t always explained. I’d like to correct that by providing a layered explanation of how the HoloLens displays work and by being very up front that this is all guesswork. I am a Microsoft MVP in the Kinect for Windows program but do not really have any insider information about HoloLens I can share and do not in any way speak for Microsoft or the HoloLens team. My guesses are really about as good as the next guy’s.

High Level Explanation

The HoloLens display is basically a set of transparent screens placed just in front of the eyes. Each eyepiece or screen lets light through and also shows digital content the way your monitor does. Each screen shows a slightly different image to create a stereoscopic illusion, the way the View-Master toy does or 3D glasses do at 3D movies.
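To put a rough number on “slightly different,” here is a minimal sketch of how far apart the two eyes’ lines of sight are for an object straight ahead. It assumes simple geometry and an interpupillary distance of 64 mm; the function name and the distances are illustrative assumptions, not anything known about HoloLens itself.

```python
import math

def vergence_angle_deg(distance_m: float, ipd_m: float = 0.064) -> float:
    """Angle between the two eyes' lines of sight to a point straight ahead.

    ipd_m is an assumed interpupillary distance (64 mm), not a HoloLens value.
    """
    return math.degrees(2 * math.atan(ipd_m / (2 * distance_m)))

# Nearby objects need noticeably different left/right images; far ones barely do.
for d in (0.5, 2.0, 10.0):
    print(f"{d:>5.1f} m -> {vergence_angle_deg(d):.2f} degrees")
```

The closer the virtual object, the larger the angle, which is why each screen has to render its own view rather than sharing one image.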

A few years ago I worked with transparent screens created by Samsung that were basically just LCD screens with their backings removed. LCDs work by suspending liquid crystals between layers of glass. There are two factors that make them bad candidates for augmented reality head mounts. First, they require soft backlighting in order to be reasonably useful. Second, and more importantly, they are too thick.

At this level of granularity, we can say that HoloLens works by using a light-weight material that displays color images while at the same time letting light through the displays. For fun, let’s call this sort of display an augmented reality combiner, since it combines the light from digital images with the light from the real world passing through it.

Intermediate Level Explanation

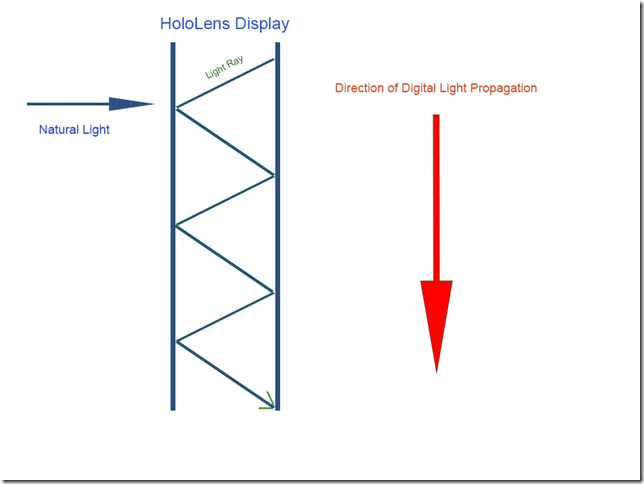

Light from the real world passes through two transparent pieces of plastic. That part is pretty easy to understand. But how does the digital content get onto those pieces of plastic?

The magic concept here is that the displays are waveguides. Optical fiber is an instance of a waveguide we are all familiar with. Optical fiber is a great method for transferring data over long distances because it loses very little light along the way: total internal reflection keeps the light bouncing back and forth between its internal surfaces.

The two HoloLens eye screens are basically flat optical fibers or planar waveguides. Some sort of image source at one end of these screens sends out RGB data along the length of the transparent displays. We’ll call this the image former. This light bounces around the internal front and back of each display and in this manner traverses down its length. These light rays eventually get extracted from the displays and make their way to your pupils. If you examine the image of the disassembled HoloLens at the top, it should be apparent that the image former is somewhere above where the bridge of your nose would go.
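The bouncing described above only works when light hits the inner surface at a steep enough angle to be totally internally reflected. A minimal sketch of that condition, assuming a flat slab surrounded by air and a refractive index of 1.5 (a typical value for glass or plastic, and purely an assumption here, as are the function names and dimensions):

```python
import math

def critical_angle_deg(n_guide: float, n_outside: float = 1.0) -> float:
    """Smallest angle from the surface normal that is totally internally reflected."""
    return math.degrees(math.asin(n_outside / n_guide))

def bounce_count(length_mm: float, thickness_mm: float, angle_deg: float) -> int:
    """Approximate number of reflections along a slab of the given length.

    Each bounce moves the ray thickness * tan(angle) further down the guide.
    """
    step_mm = thickness_mm * math.tan(math.radians(angle_deg))
    return int(length_mm // step_mm)

n = 1.5  # assumed refractive index, not a known HoloLens value
print(f"critical angle: {critical_angle_deg(n):.1f} degrees")
print(f"bounces over 50 mm in a 1 mm slab at 60 degrees: {bounce_count(50, 1, 60)}")
```

Any ray steeper than the critical angle simply leaks out of the plastic, so the image former has to inject light within a fairly narrow range of angles.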

Low Level Explanation

The lowest level is where much of the controversy comes in. In fact, it’s such a low level that many people don’t realize it’s there. And when I think about it, I pretty much feel like I’m repeating dialog from a Star Trek episode about dilithium crystals and quantum phase converters. I don’t really understand this stuff. I just think I do.

In the field of augmented reality, there are two main techniques for extracting light from a waveguide: holographic extraction and diffractive extraction. A holographic optical element has holograms inside the waveguide which route light into and out of the waveguide. Two holograms can be used, one at each end of the microdisplay: the first turns the originating image 90 degrees from the source and sends it down the length of the waveguide; the second intercepts the light rays and turns them another 90 degrees toward the wearer’s pupils.

A company called TruLife Optics produces these types of displays and has a great FAQ to explain how they work. Many people, including Oliver Kreylos who has written quite a bit on the subject, believe that this is how the HoloLens microdisplays work. One reason for this is Microsoft’s emphasis on the terms “hologram” and “holographic” to describe their technology.

On the other hand, diffractive extraction is a technique pioneered by researchers at Nokia, and Microsoft currently owns the resulting patents and research. For a variety of reasons, this technique falls under the semantic umbrella of a related technology called Exit Pupil Expansion (EPE). EPE literally means enlarging the exit pupil, the region in which the image is visible, so that it covers your pupil plus every area your pupil might move to as you rotate your eyeball to take in your field of view (about a 10mm x 8mm rectangle, or eye box). This, in turn, is probably why measuring the interpupillary distance is a large part of fitting people for the HoloLens.

Nanometer-wide structures, or gratings, are placed on the surface of the waveguide at the location where we want to extract an image. The grating diffracts the light out of the waveguide and even enlarges the image. This is known as SRG, or surface relief grating, as shown in the above image from holographix.com.
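The standard grating equation gives a feel for why these structures have to be so small. The sketch below assumes light arriving from air and being diffracted into a guide of refractive index 1.5 by a grating with a 400 nm period; those numbers and the function name are illustrative assumptions, not measured HoloLens values.

```python
import math
from typing import Optional

def diffracted_angle_deg(wavelength_nm: float, period_nm: float, n_guide: float,
                         incidence_deg: float = 0.0, order: int = 1) -> Optional[float]:
    """Angle inside the guide for one diffraction order, or None if it does not propagate.

    Grating equation: n_guide * sin(theta_d) = sin(theta_i) + order * wavelength / period
    """
    s = (math.sin(math.radians(incidence_deg)) + order * wavelength_nm / period_nm) / n_guide
    if abs(s) > 1.0:
        return None  # this order is evanescent: no propagating beam at this angle
    return math.degrees(math.asin(s))

n, period = 1.5, 400.0  # assumed index and grating period
for name, wl in (("blue", 460.0), ("green", 532.0), ("red", 630.0)):
    print(name, diffracted_angle_deg(wl, period, n))
```

With these assumed values, blue and green are bent past the critical angle and stay trapped in the guide, while red is not coupled in at all. That wavelength dependence hints at why a single grating struggles to handle full color.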

Reasons for believing HoloLens is using SRG as its way of doing EPE include the Nokia connection as well as this post from Jonathan Lewis, the CEO of TruLife, in which Lewis states, following the original HoloLens announcement, that it isn’t the holographic technology he’s familiar with and is probably EPE. There’s also the second edition of Woodrow Barfield’s Wearable Computers and Augmented Reality, in which Barfield seems pretty adamant that diffractive extraction is used in HoloLens. Being a professor at the University of Washington, which has a very good technology program as well as close ties to Microsoft, he may know something about it.

On the other hand, this Microsoft patent, which is clearly talking about HoloLens, favors neither approach and ends up discussing both volume holograms (VH) and surface relief gratings (SRG). I think HoloLens is more likely to be using diffractive extraction than holographic extraction, but it’s by no means a sure thing.

Impact on Field of View

An important aspect of these two technologies is that they both involve a limited field of view based on the ways we are bouncing and bending light in order to extract it from the waveguides. As Oliver Kreylos has eloquently pointed out, “the current FoV is a physical (or, rather, optical) limitation instead of a performance one.” In other words, any augmented reality head mounted display (HMD) or near eye display (NED) is going to suffer from a small field of view when compared to virtual reality devices. This is equally true of the currently announced devices like HoloLens and Magic Leap, the currently available AR devices like those by Vuzix and DigiLens, and the expected but unannounced devices from Google, Facebook and Amazon. Let’s call this the keyhole problem (KP).
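A back-of-the-envelope version of this optical limit can be sketched as follows. It assumes a single grating, a single wavelength, a guide surrounded by air, and light that can be guided at any angle between the critical angle and some maximum; real designs are more complicated, and the function name and index values are assumptions for illustration only.

```python
import math

def max_fov_deg(n_guide: float, max_guided_angle_deg: float = 90.0) -> float:
    """Rough upper bound on the in-air field of view (along one axis) a guide can carry.

    Guided rays must satisfy 1 <= n * sin(theta) <= n * sin(theta_max); a grating
    shifts the in-air range of sin(angle) into that window, so the window width
    sets the widest symmetric field of view.
    """
    low = 1.0  # n * sin(critical angle) is always 1 for a guide in air
    high = n_guide * math.sin(math.radians(max_guided_angle_deg))
    half_width = (high - low) / 2
    return math.degrees(2 * math.asin(half_width))

for n in (1.5, 1.7, 2.0):
    print(f"n = {n}: about {max_fov_deg(n):.0f} degrees at best")
```

Under these assumptions the only way to widen the view is a higher refractive index or extra optical tricks, which fits the point that the limit is optical rather than a matter of rendering performance.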

The limitations posed by KP are a direct result of the need to use transparent displays that are actually wearable. Given this, I think it is going to be a waste of time to lament the fact that AR FOVs are smaller than we have been led to expect from the movies we watch. I know Iron Man has already had much better AR for several years with a 360 degree field of view but hey, he’s a superhero and he lives in a comic book world and the physical limitations of our world don’t apply to him.

Instead of worrying that tech companies for some reason are refusing to give us better augmented reality, it probably makes more sense to simply embrace the laws of physics and recognize that, as we’ve been told repeatedly, hard AR is still several years away and there are many technological breakthroughs still needed to get us there (let’s say five years or even “in the Windows 10 timeframe”).

In the meantime, we are being treated to first generation AR devices with all that the term “first generation” entails. This is really just as well because it’s going to take us a lot of time to figure out what we want to do with AR gear, when we get beyond the initial romantic phase, and a longer amount of time to figure out how to do these experiences well. After all, that’s where the real fun comes in. We get to take the next couple of years to plan out what kinds of experiences we are going to create for our brave new augmented world.

Great article, I finally understand why Hololens has such a small FOV.

Thank you!