In an ideal world, the resume is an advertisement for our capabilities and the interview process is an audit of those claims. Many factors have contributed to complicating what should be a simple process.

The first is the rise of professional IT recruiters and the automation of the resume process. Recruiters bring a lot to the game, offering a wider selection of IT job candidates to hiring companies, on the one hand, and providing a wider selection of jobs to job hunters, on the other. Automation requires standardization, however, and this has led to an overuse of key search terms when matching candidates to positions. The process begins with job specs from the hiring company — which parenthetically often have little to do with the actual job itself and highlights the frequent disconnect between IT departments and HR departments. A naive job hunter would try to describe their actual experience, which typically will not match the job spec as written by HR. At this point the recruiter helps the job hunter modify the details of her resume to match the template provided by the hiring company by injecting and prioritizing key buzzwords into the resume. “I’m sorry but Lolita, Inc will never hire you unless you have synesthesia listed in your job history. You do have experience with synesthesia, don’t you?”

All of this gerrymandering is required in order to get to the next step, the job interview. Unfortunately, the people doing the job interview have little confidence in the resume as a vehicle for accurately describing a candidate’s actually abilities. First of all, they know that recruiters have already gone over it to eliminate useful information and replace it with keywords instead. Next, the interviewers typically haven’t actually seen the HR job specs and do not understand what kind of role they are hiring for. Finally, none of the interviewers have any particular training in doing job interviews or any particular skill in ascertaining what a candidate knows. In short, the interviewer doesn’t know what he’s looking for and wouldn’t know how to get it if he did.

A savvy interviewer will probably realize that he is looking for the sort of generalist that Joel Spolsky describes as “smart and gets things done,” but how do you interview for that? The tools the interviewer is provided with are not generic but instead highly specific technology skills. At some point, this impedance mismatch between technology specific interview questions on the one had and a desire to hire generalists on the other (technology, after all, simply changes too quickly to look for only one skillset) lead to an increased reliance on behavioral questions and eventually Google-style language games. Neither of these, it turns, out, particularly help in hiring good candidates.

Once we historically severed any attempt to match interview questions to actual skills, the IT interview process was allowed to become a free floating hermeneutic exercise. Abstruse but non-specific questions involving principles and design patterns have taken over the process. This has led to two strange outcomes. On the one hand, job applicants are now required to be fluent in technical information they will never actually use in their jobs. Literary awareness of ten year old blog posts by Martin Fowler are more important than actually knowing how to get things done. And if the job interviewer exhibits any self-awareness when he turns down a candidate for not being clear on the justified uses of the CQRS pattern (there are none), it will not be because the candidate didn’t know something important for the job but rather because the candidate was unwilling to play the software architecture language game, and anyone unwilling to play the game is likely going to be a poor cultural fit.

The other consequence of an increased reliance on abstruse and non-essential IT knowledge has been the rise of the Architect across the industry. The IT industry has created a class of software developers who cannot actually develop software but instead specialize in telling other people what is wrong with their code. The architect is a specialization that probably indicates a deviant phase in the software industry – but at the same time it is a natural outcome of our IT job spec – resume – interview process. The skills of a modern software architect – knowledge of abstruse information and jargon often combined with an inability to get things done – is what we currently look for through our IT cargo cult hiring rituals.

This distinction between the ritual of IT hiring and the actual goals of IT hiring become most apparent when we look for specific as opposed to generalist skills. We hire generalists to be on staff over a long period. We hire specialists to perform difficult but real tasks that can eventually be handed over to our generalists – when we need to get something specific done.

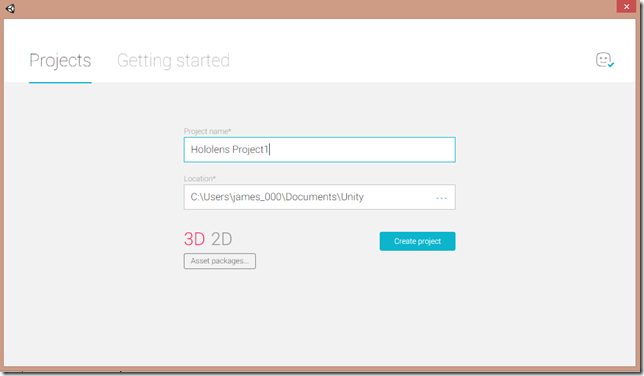

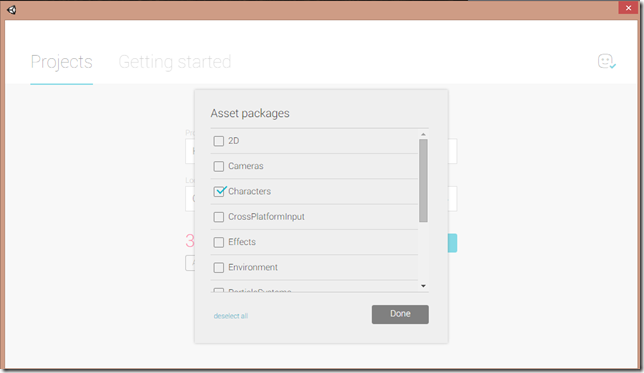

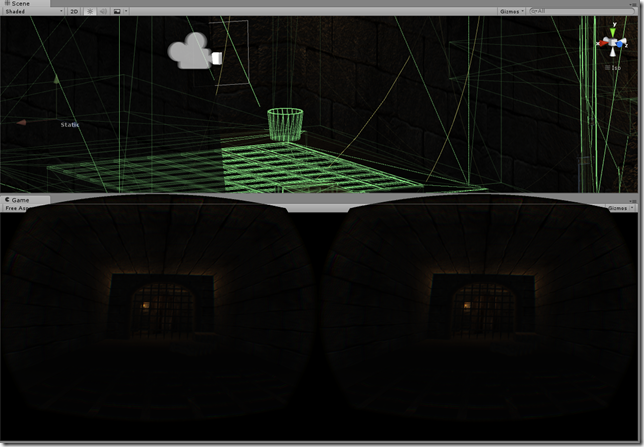

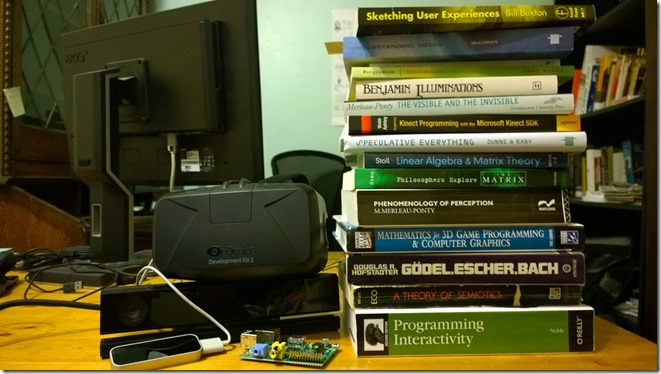

Which gets us to the point of this post. What are the skills we should look for when hiring for a HoloLens developer? And what are the skills a HoloLens developer should be highlighting on her resume?

At this point in time, when there is still no SDK generally available for the HoloLens and all HoloLens coders are working for Microsoft and under various NDAs, it is hard to say. Fortunately, important clues have been provided by the recent announcement of the first consulting agency dedicated to the HoloLens and co-founded by someone who has been working on HoloLens applications for Microsoft over the past year. The company Object Theory was just started by Michael Hoffman and Raven Zachary and they threw up a website to advertise this new venture.

Among the tasks involved in creating this sort of extremely specialized website is explaining what capabilities you offer. First, they offer experience since Hoffman has worked on several of the demos that Microsoft has been exhibiting at conferences and in promotional videos. But is this enough of a differentiator? What skills do they have to offer to a company looking to build a HoloLens application?

This is part of the fascination of their “Work” page. It cannot describe any actual work since the company just started and hasn’t technically done any technical work. Instead, it provides a list of capabilities that look amazingly like resume keywords – but different from any keywords you may have come across:

-

-

-

-

- Entirely new Natural User Interfaces (NUI)

- Surface reconstruction and object persistence

- 3D Spatial HRTF audio

- Mesh reduction, culling and optimization

- Baked shadows and ambient occlusion

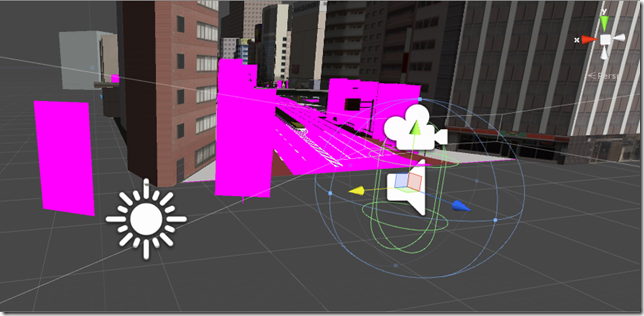

- UV mapping

- Optimized render shaders

- Efficient WiFi connectivity to back-end services

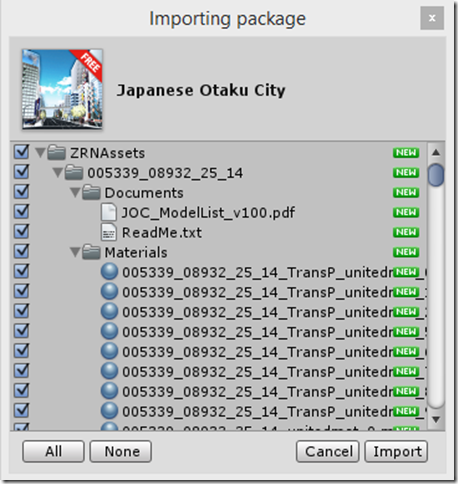

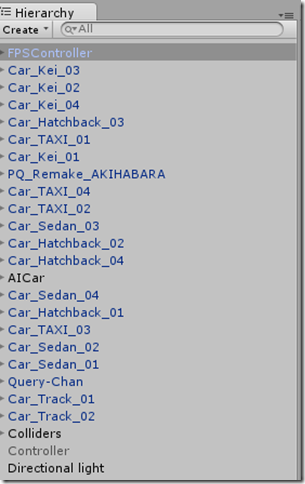

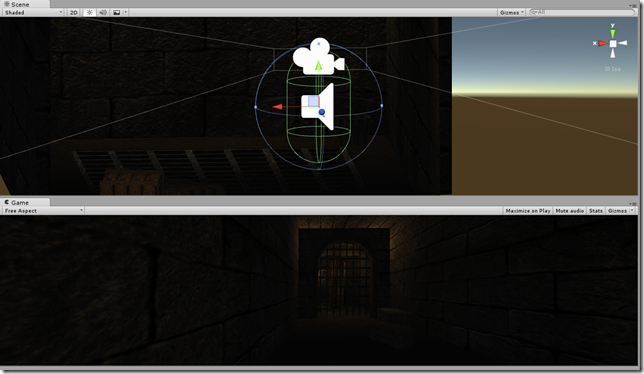

- Unity and DirectX

- Windows 10 APIs

-

-

-

These, in fact, are probably the sorts of skills you should be putting on your resume – or learning about in order to put on your resume – if getting a job programming HoloLens is your goal.

The verso side of this coin is that the list can also be turned into a great set of interview questions for someone thinking of hiring for HoloLens development, for instance:

Explain the concept of NUI to me.

Tell me about your experience with surface reconstruction and object persistence.

What is 3D spatial HRTF audio and why is it important for engineering HoloLens apps?

What are mesh reduction, mesh culling and mesh optimization?

Do you know anything about baked shadows and ambient occlusion?

Describe how you would go about performing UV mapping.

What are optimized render shaders and when would you need them?

How does the HoloLens communicate with external services such as a database?

What are the advantages and disadvantages of developing in Unity vs DirectX?

Describe the Windows 10 APIs that are used in HoloLens application development.

Then again, maybe these questions are a bit too abstruse?