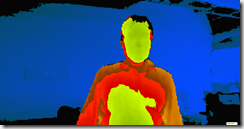

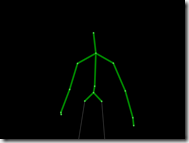

It’s true. In November, Microsoft will release the Kinect2 for approximately $500. The new Kinect2 comes with HD video at 30 fps (we currently get 640×480 with the Kinect1), much-improved skeleton tracking and improved audio tracking. One of the most significant changes is in depth tracking. Instead of the PrimeSense structured-light technology used in the Kinect1, the Kinect2 uses the more traditional and more accurate time-of-flight technology. Since most time-of-flight depth cameras start at around $1K, getting one in a Kinect2, along with all the other features, for half that price is pretty amazing.

But the deal doesn’t stop there. If you buy the Kinect for XBox, you automatically get an XBox for free! You actually can’t even buy the XBox on its own. You can only get it if you buy the Kinect2.

How, you may ask, can they give the new XBox One away for free? Apparently the price of the XBox One will be subsidized through game sales. Since games for the XBox will tend to have some sort of Kinect capability (made possible by the requirement that you can’t get the XBox on its own), the expectation seems to be that these unique games will sell in enough volume to eventually recoup the cost of producing the XBox One.

But what if you aren’t interested in gaming? What if, like us at my company, Razorfish, you are mainly interested in building commercial interfaces and artistic experiences with Kinect technology?

In this case, Microsoft will be providing another version of the Kinect (one assumes it will be called something like Kinect2 for Windows, or perhaps K4W2, its Star Wars droid name) with a USB 3 adapter that plugs into a PC. And because it is for people who are not interested in gaming, it will probably cost a bit less than $500 to make up for the fact that it doesn’t come with a free XBox One and will never recoup that hardware cost from non-gamers. By the way, this version of the Kinect sensor will be released some time (perhaps months?) after the November release of the K4X1.

Finally, to make the distinction between the two kinds of Kinect2s clear, the Kinect2 for XBox will not plug into a PC and Kinect2 for Windows will not plug into an XBox. It’s just cleaner that way.

With the original Kinect, quite a bit of confusion was introduced by the fact that, when it was released, it used a standard USB connector that could be plugged into either the XBox 360 or a PC. This turned out to be a great thing for Microsoft because it set off an amazing flood of creativity among hackers, who started building their own frameworks and drivers to read the USB data and then built applications on top of them.

Overnight, this grassroots Kinect Hacks movement made Microsoft cool again. There is currently talk going around that the USB connector on the Kinect was simply fortuitous. I’m pretty sure, however, that it was prescient, at least on someone’s part, and that the intent (again on someone’s part, if not everyone’s) was to provide the sort of platform that could be used to build more than games.

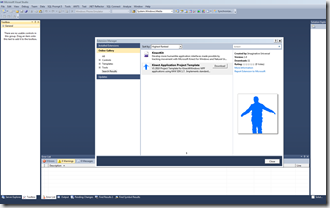

As Microsoft moved forward with the development of the Kinect SDK as a platform on which developers could build Kinect applications, they decided that it should be coupled with a special version of the “Kinect” called Kinect for Windows, which would carry special firmware supporting near mode. Additionally, the commercial version of the hardware (which was pretty much the same as the gaming version) required a special dongle (see photo above) to help regulate power on PCs. The biggest differences between the two Kinects, however, were the licensing terms and the price. Basically, if you wanted to use Kinect technology commercially with the Kinect SDK, you needed to use the Kinect for Windows sensor, which carried a higher, unsubsidized price.

This, naturally, caused a lot of confusion. People wondered why Microsoft was overcharging for the commercial version of the sensor when, with a Copernican frame of mind, they might just as easily have asked why Microsoft was undercharging for the gaming version.

With the Kinect2 sensors, all of this confusion is removed by fiat since the gaming version and commercial version now have different connectors. From a hardware standpoint, rather than merely a legal one, you cannot use your gaming sensor with a PC.

Of course, you could also perform a Copernican revolution on my framing above and suggest that it isn’t the XBox One that is being subsidized through the purchase of the Kinect2 but rather the Kinect2 that is being subsidized through the purchase of the XBox One.

It’s all a bit of an accounting trick, isn’t it? Basically, the money has to come from somewhere. Given that Microsoft received a lot of free, positive PR from the Kinect hacking movement, it would be cool if they gave a little back and made the non-gaming Kinect2 sensor more accessible.

On the other hand, a time-of-flight camera for under $500, along with all the other features loaded onto the Kinect2, is already a pretty amazing deal for weekend coders, installation artists, and retailers.

In any case, it gives me peace of mind to think of the Kinect2 sensor as a $500 device that comes with a free XBox One. A lot of the angst I might otherwise feel about pricing simply melts away. Though if Microsoft felt like subsidizing the price of the K4W2 sensor with some of the excess money they make from SharePoint licenses, I’d be cool with that, too.