MapDepthFrameToColorFrame is a beautiful method introduced rather late into the Kinect SDK v1. As far as I know, it primarily has one purpose: to make background subtraction operations easier and more performant.

Background subtraction is a technique for removing every pixel in an image that does not belong to the primary actors. Green screening (which, if you are old enough to have seen the original Star Wars when it came out, you know as blue screening) is a particular implementation of background subtraction in the movies, in which actors perform in front of a green background. The green background is then subtracted from the final film and another background image is inserted in its place.

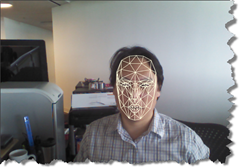

With the Kinect, background subtraction is accomplished by comparing the data streams produced by the depth camera and the color camera. The depth camera will actually tell us which pixels of the depth image belong to a human being (with the precondition that Skeleton Tracking must be enabled for this to work). The pixels in the depth stream must then be compared to the pixels in the color stream in order to subtract out any pixels that do not belong to a player. The big trick is that each pixel in the depth stream must be mapped to an equivalent pixel in the color stream to make this comparison possible.
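To make the "which pixels belong to a human being" part concrete: each 16-bit depth pixel carries two values, the player index in its low three bits and the distance in the remaining high bits. Here is a minimal sketch of unpacking them. The two constants mirror `DepthImageFrame.PlayerIndexBitmask` and `DepthImageFrame.PlayerIndexBitmaskWidth` from the SDK, but I have redefined them locally so the snippet runs without the SDK installed; the `DepthPixel` class and its method names are my own, purely for illustration.

```csharp
using System;

static class DepthPixel
{
    // Local copies of the SDK constants (assumed values: mask 7, width 3).
    public const int PlayerIndexBitmask = 7;
    public const int PlayerIndexBitmaskWidth = 3;

    // Low three bits: 0 means "no player", nonzero identifies a player.
    public static int PlayerIndex(short raw)
    {
        return raw & PlayerIndexBitmask;
    }

    // Remaining high bits: distance from the sensor in millimeters.
    public static int DepthMillimeters(short raw)
    {
        return raw >> PlayerIndexBitmaskWidth;
    }

    static void Main()
    {
        // Hypothetical pixel: 1500 mm away, belonging to player 2.
        short raw = (short)((1500 << PlayerIndexBitmaskWidth) | 2);
        Console.WriteLine(PlayerIndex(raw));       // 2
        Console.WriteLine(DepthMillimeters(raw));  // 1500
    }
}
```

For background subtraction only the first of these matters: a pixel is kept when its player index is greater than zero.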

I’m going to show you first how this was traditionally done (and by “traditionally” I really mean in a three to four month period before the SDK v1 was released) and then a better way to do it. In both techniques, we are working with three images: the image encoded in the color stream, the image encoded in the depth stream, and the resultant “output” bitmap we are trying to reconstruct pixel by pixel.

The traditional technique goes through the depth stream pixel by pixel and looks up the corresponding pixel location in the color stream, one at a time, using the MapDepthToColorImagePoint method.

    var pixelFormat = PixelFormats.Bgra32;
    WriteableBitmap target = new WriteableBitmap(depthWidth, depthHeight,
        96, 96, pixelFormat, null);
    var targetRect = new System.Windows.Int32Rect(0, 0, depthWidth, depthHeight);
    var outputBytesPerPixel = pixelFormat.BitsPerPixel / 8;

    sensor.AllFramesReady += (s, e) =>
    {
        using (var depthFrame = e.OpenDepthImageFrame())
        using (var colorFrame = e.OpenColorImageFrame())
        {
            if (depthFrame != null && colorFrame != null)
            {
                // Copy the raw depth and color data out of the frames.
                var depthBits = new short[depthFrame.PixelDataLength];
                depthFrame.CopyPixelDataTo(depthBits);
                var colorBits = new byte[colorFrame.PixelDataLength];
                colorFrame.CopyPixelDataTo(colorBits);

                // Bytes per row of the color image.
                int colorStride = colorFrame.BytesPerPixel * colorFrame.Width;

                byte[] output = new byte[depthWidth * depthHeight * outputBytesPerPixel];
                int outputIndex = 0;

                for (int depthY = 0; depthY < depthFrame.Height; depthY++)
                {
                    for (int depthX = 0; depthX < depthFrame.Width;
                        depthX++, outputIndex += outputBytesPerPixel)
                    {
                        var depthIndex = depthX + (depthY * depthFrame.Width);

                        // The low bits of each depth pixel hold the player index.
                        var playerIndex = depthBits[depthIndex]
                            & DepthImageFrame.PlayerIndexBitmask;

                        // One mapping call per depth pixel.
                        var colorPoint = sensor.MapDepthToColorImagePoint(
                            depthFrame.Format, depthX, depthY,
                            depthBits[depthIndex], colorFrame.Format);

                        // Clamp, since a mapped point can fall outside the color frame.
                        var colorX = Math.Min(Math.Max(colorPoint.X, 0), colorFrame.Width - 1);
                        var colorY = Math.Min(Math.Max(colorPoint.Y, 0), colorFrame.Height - 1);
                        var colorPixelIndex = (colorX * colorFrame.BytesPerPixel)
                            + (colorY * colorStride);

                        output[outputIndex] = colorBits[colorPixelIndex + 0];
                        output[outputIndex + 1] = colorBits[colorPixelIndex + 1];
                        output[outputIndex + 2] = colorBits[colorPixelIndex + 2];

                        // Opaque for player pixels, transparent for everything else.
                        output[outputIndex + 3] = playerIndex > 0 ? (byte)255 : (byte)0;
                    }
                }

                target.WritePixels(targetRect, output,
                    depthFrame.Width * outputBytesPerPixel, 0);
            }
        }
    };

You’ll notice that we are traversing the depth image by going across pixel by pixel (the inner loop) and then down row by row (the outer loop). For reference, the number of bytes in one row of a bitmap is known as its stride; for these tightly packed frames it is the pixel width multiplied by the bytes per pixel. Inside the inner loop, we map each depth pixel to its equivalent pixel in the color stream by using the MapDepthToColorImagePoint method.
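The index arithmetic in that loop is easier to check in isolation. Treating the stride as the number of bytes in one row (which is how colorStride is computed in the code above), the byte offset of a pixel is its row times the stride plus its column times the bytes per pixel. The following is only a sketch under the assumption that rows are tightly packed with no padding; `PixelAddressing` and its methods are hypothetical names of mine, not part of the SDK.

```csharp
using System;

static class PixelAddressing
{
    // Bytes in one row of a tightly packed bitmap (no row padding assumed).
    public static int Stride(int width, int bytesPerPixel)
    {
        return width * bytesPerPixel;
    }

    // Byte offset of the first channel of pixel (x, y).
    public static int ByteOffset(int x, int y, int width, int bytesPerPixel)
    {
        return (y * Stride(width, bytesPerPixel)) + (x * bytesPerPixel);
    }

    static void Main()
    {
        // A 640x480 image at 4 bytes per pixel has a stride of 2560 bytes.
        Console.WriteLine(Stride(640, 4));             // 2560
        // Pixel (10, 2): two full rows plus ten pixels into the third row.
        Console.WriteLine(ByteOffset(10, 2, 640, 4));  // 5160
    }
}
```

The same formula is what turns a ColorImagePoint into colorPixelIndex; the three color channels then sit at that offset and the two bytes after it.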

It turns out that these calls to MapDepthToColorImagePoint are rather expensive. It is much more efficient to create an array of ColorImagePoints and populate it in one go before doing any looping. This is exactly what MapDepthFrameToColorFrame does. The following example uses it in place of the iterative MapDepthToColorImagePoint method. It has the added advantage that, instead of iterating through the depth stream column by column and row by row, I can walk the depth array with a single index, which removes the need for nested loops.

    var pixelFormat = PixelFormats.Bgra32;
    WriteableBitmap target = new WriteableBitmap(depthWidth, depthHeight,
        96, 96, pixelFormat, null);
    var targetRect = new System.Windows.Int32Rect(0, 0, depthWidth, depthHeight);
    var outputBytesPerPixel = pixelFormat.BitsPerPixel / 8;

    sensor.AllFramesReady += (s, e) =>
    {
        using (var depthFrame = e.OpenDepthImageFrame())
        using (var colorFrame = e.OpenColorImageFrame())
        {
            if (depthFrame != null && colorFrame != null)
            {
                // Copy the raw depth and color data out of the frames.
                var depthBits = new short[depthFrame.PixelDataLength];
                depthFrame.CopyPixelDataTo(depthBits);
                var colorBits = new byte[colorFrame.PixelDataLength];
                colorFrame.CopyPixelDataTo(colorBits);

                // Bytes per row of the color image.
                int colorStride = colorFrame.BytesPerPixel * colorFrame.Width;

                byte[] output = new byte[depthWidth * depthHeight * outputBytesPerPixel];
                int outputIndex = 0;

                // Map the whole depth frame to color coordinates in a single call.
                var colorCoordinates = new ColorImagePoint[depthFrame.PixelDataLength];
                sensor.MapDepthFrameToColorFrame(depthFrame.Format, depthBits,
                    colorFrame.Format, colorCoordinates);

                for (int depthIndex = 0; depthIndex < depthBits.Length;
                    depthIndex++, outputIndex += outputBytesPerPixel)
                {
                    // The low bits of each depth pixel hold the player index.
                    var playerIndex = depthBits[depthIndex]
                        & DepthImageFrame.PlayerIndexBitmask;

                    var colorPoint = colorCoordinates[depthIndex];

                    // Clamp, since a mapped point can fall outside the color frame.
                    var colorX = Math.Min(Math.Max(colorPoint.X, 0), colorFrame.Width - 1);
                    var colorY = Math.Min(Math.Max(colorPoint.Y, 0), colorFrame.Height - 1);
                    var colorPixelIndex = (colorX * colorFrame.BytesPerPixel)
                        + (colorY * colorStride);

                    output[outputIndex] = colorBits[colorPixelIndex + 0];
                    output[outputIndex + 1] = colorBits[colorPixelIndex + 1];
                    output[outputIndex + 2] = colorBits[colorPixelIndex + 2];

                    // Opaque for player pixels, transparent for everything else.
                    output[outputIndex + 3] = playerIndex > 0 ? (byte)255 : (byte)0;
                }

                target.WritePixels(targetRect, output,
                    depthFrame.Width * outputBytesPerPixel, 0);
            }
        }
    };
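If you want to try the single-pass loop without a sensor attached, it can be pulled out into a plain function that takes the depth array, a precomputed coordinate table, and the color bytes. What follows is a rough sketch along those lines rather than anything from the SDK: `PlayerMask`, `Compose`, and the `Point` struct (a stand-in for ColorImagePoint) are names I made up, the player-index mask is hard-coded as 7, and the clamping of out-of-range coordinates is my own precaution.

```csharp
using System;

static class PlayerMask
{
    // Hypothetical stand-in for the SDK's ColorImagePoint.
    public struct Point { public int X; public int Y; }

    // Builds a BGRA buffer with one pixel per depth sample: color comes from
    // the mapped color pixel, alpha is 255 for player pixels and 0 otherwise.
    public static byte[] Compose(short[] depth, Point[] map, byte[] color,
                                 int colorWidth, int colorHeight, int bytesPerPixel)
    {
        var output = new byte[depth.Length * 4];
        int stride = colorWidth * bytesPerPixel;

        for (int i = 0, o = 0; i < depth.Length; i++, o += 4)
        {
            // Clamp so an out-of-range mapping cannot index past the buffer.
            int x = Math.Min(Math.Max(map[i].X, 0), colorWidth - 1);
            int y = Math.Min(Math.Max(map[i].Y, 0), colorHeight - 1);
            int c = (x * bytesPerPixel) + (y * stride);

            output[o] = color[c];
            output[o + 1] = color[c + 1];
            output[o + 2] = color[c + 2];
            // Player index lives in the low three bits of the depth value.
            output[o + 3] = (depth[i] & 7) > 0 ? (byte)255 : (byte)0;
        }
        return output;
    }

    static void Main()
    {
        // Two depth pixels: the first has no player, the second is player 1.
        var depth = new short[] { (short)(1000 << 3), (short)((1000 << 3) | 1) };
        var map = new[] { new Point { X = 0, Y = 0 }, new Point { X = 1, Y = 0 } };
        // A 2x1 color image at 4 bytes per pixel.
        var color = new byte[] { 10, 20, 30, 0, 40, 50, 60, 0 };

        var result = Compose(depth, map, color, 2, 1, 4);
        Console.WriteLine(string.Join(",", result));  // 10,20,30,0,40,50,60,255
    }
}
```

Because the coordinate table is filled before the loop starts, the loop body itself does nothing but array lookups, which is where the savings over the per-pixel mapping call come from.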