With the Mixed Reality Toolkit 2017.1.2, the Keyword Manager was finally retired, after being “obsolete” for the past several toolkit iterations.

As the toolkit matures, many key components are being refactored to make them more flexible and architecturally proper. The downside to this – and the source of much frustration – is that these refactors tend to upend what developers are used to doing. The Keyword Manager is a great example of this. It was one of the best Unity-style interfaces for HoloLens / MR because it encapsulated a lot of complex code in an easy-to-use, drag-and-drop visual component.

The challenge for those working on the toolkit – Stephen Hodgson, Neeraj Wadhwa, and all the others – is to refactor without too badly breaking the interface abstractions we’ve all gotten used to. For the KeywordManager refactor, this was accomplished by breaking the original component into two parts, a SpeechInputSource and a SpeechInputHandler.

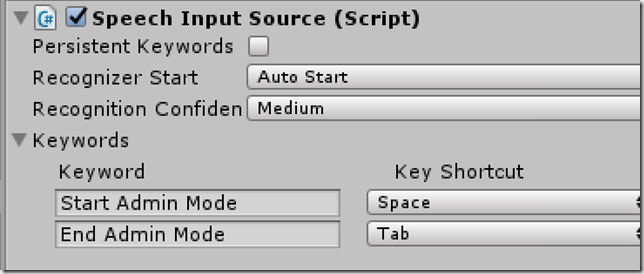

The SpeechInputSource lets you set whether speech recognition starts up automatically and what recognition confidence level is required (you want this higher if you are using ambiguous or short phrases like “Start” and “Stop”). The Persistent Keywords field lets you keep the same speech recognition phrases between different scenes in your app. Most important, though, is the Keywords list, where you add the phrases you want your app to recognize.

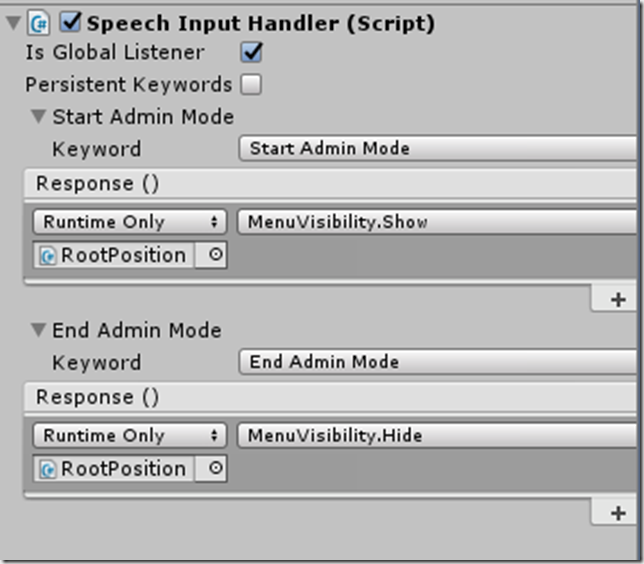

The SpeechInputHandler is the component that lets you determine what happens when a phrase is recognized (the response). You click on the plus icon to add a response and select the phrase to be handled in the Keyword field. You can then drag and drop GameObjects into the response and select the script and method to be called.

The one thing you need to remember is to check the Is Global Listener field if you want behavior similar to the old KeywordManager. With it checked, the handler listens for all speech commands all the time. If Is Global Listener is not checked, then only the SpeechInputHandler the user is gazing at will receive commands. This is really useful if you have multiple copies of the same object and only want to apply commands to one particular instance at a time.
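To make the routing rule concrete, here is a small toolkit-independent model of it in Python. It is only a sketch of the behavior described above, not the toolkit's actual code: the `Handler` class, the `dispatch` function, and the case-insensitive keyword matching are all assumptions made for illustration, and the real input system may deliver events in a different order.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Handler:
    """Hypothetical stand-in for one SpeechInputHandler in the scene."""
    name: str
    is_global_listener: bool = False
    # Maps a keyword (stored lowercase) to the response to invoke.
    responses: Dict[str, Callable[[], None]] = field(default_factory=dict)

    def on_keyword(self, keyword: str) -> bool:
        """Invoke the matching response, if any. Returns True when handled."""
        response = self.responses.get(keyword.lower())  # assumed case-insensitive
        if response is None:
            return False
        response()
        return True


def dispatch(keyword: str, handlers: List[Handler],
             gazed_at: Optional[Handler]) -> List[str]:
    """Deliver a recognized keyword to every global listener and to the
    handler the user is gazing at (if any). Returns the names that responded."""
    responded = []
    for handler in handlers:
        if handler.is_global_listener or handler is gazed_at:
            if handler.on_keyword(keyword):
                responded.append(handler.name)
    return responded


# Two copies of the same object plus one always-listening menu.
log: List[str] = []
cube_a = Handler("CubeA", responses={"explode": lambda: log.append("CubeA explodes")})
cube_b = Handler("CubeB", responses={"explode": lambda: log.append("CubeB explodes")})
menu = Handler("Menu", is_global_listener=True,
               responses={"reset": lambda: log.append("scene reset")})
scene = [cube_a, cube_b, menu]

dispatch("Explode", scene, gazed_at=cube_b)  # only the gazed-at cube responds
dispatch("Reset", scene, gazed_at=cube_b)    # the global menu responds regardless of gaze
dispatch("Explode", scene, gazed_at=None)    # nobody is gazed at, so nothing happens
print(log)
```

In this model, checking Is Global Listener on both cubes would make every “Explode” command hit both of them at once, which is the old KeywordManager behavior.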